What's up, what's uppp!

You're getting this email because you subscribed to Aidstation.

If you're new here, welcome. I share what I'm using, what I'm learning, and what's happening in AI every week.

I meant it when I said I'd help

The last time I offered to help people get unstuck, I got more replies than any newsletter I've ever sent. And the conversations were genuinely great.

So I'm doing it again.

Whether you replied last time or not, I want to hear from you. Tell me what you're working on, what you're trying to build, where you're stuck. Maybe you want to set up Claude Code but don't know where to start. Maybe you have an automation idea but don't know which tools to use. Maybe you just need someone to tell you if your approach makes sense.

Whatever it is, reply. I'll personally help you figure it out. The tools, the approach, the setup. And if I see patterns in what people are asking, I'll turn the best ones into full breakdowns for everyone.

Claude Code

A few of you replied asking about Claude Code specifically. How to set it up, whether to use branches, how to handle agent teams, all of it.

So here's what actually matters.

Plan before you build. This is the biggest difference between people who get results and people who don't. Before you touch any code, have your agent write a plan. A task breakdown, a PRD, whatever. Split everything into small, clear steps. The clearer the plan, the better the output.

Branches and commits. Commits are save points. Every time something works, commit it. If things break later, you roll back. Branches let you experiment without touching your live code. Create a branch, work on it, merge when it works, delete when it doesn't. Your production site is never affected. If you're shipping anything people use, this is non-negotiable.
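If it helps to see that as actual commands, here's a minimal sketch in Python. It only builds the list of git commands for one experiment and prints them, so nothing touches your repo unless you call `run_all` yourself. The branch name, the commit message, and the assumption that your live branch is called `main` are all made up for illustration.

```python
import subprocess


def branch_workflow(branch, message, worked, main="main"):
    """Return the git commands for one experiment on a throwaway branch.

    In practice you (or your agent) do the actual work between creating
    the branch and committing; this just lists the git steps around it.
    """
    commands = [
        ["git", "switch", "-c", branch],   # new branch, live code stays untouched
        ["git", "add", "-A"],              # stage everything that changed
        ["git", "commit", "-m", message],  # save point you can come back to
        ["git", "switch", main],           # back to the live branch
    ]
    if worked:
        commands.append(["git", "merge", branch])         # bring the work in
        commands.append(["git", "branch", "-d", branch])  # remove the merged branch
    else:
        commands.append(["git", "branch", "-D", branch])  # throw the experiment away
    return commands


def run_all(commands, cwd="."):
    """Run the commands in order and stop at the first one that fails."""
    for cmd in commands:
        subprocess.run(cmd, cwd=cwd, check=True)


if __name__ == "__main__":
    # Dry run: print the commands instead of executing them.
    for cmd in branch_workflow("try-new-header", "Add new header", worked=True):
        print(" ".join(cmd))
```

The only difference between the two outcomes is the last step: a merge plus a safe delete when it worked, a forced delete when it didn't.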

Agent teams, yes, use them. Multiple agents in parallel is faster than one doing everything sequentially. But watch where they start. Sometimes you'll catch one heading in the wrong direction in the first 30 seconds. Catch it early and you save yourself a ton of wasted tokens and bad code. And always start from a clear plan. Agents without a plan burn through tokens figuring out what they're supposed to do.

Stop stacking tools. This is the one nobody wants to hear. I see people adding every new plugin, every MCP server, every skill they find online. That's not helping you. It's making your setup heavier and your context noisier. Pick your stack, go deep with it, and resist the urge to install something new every week. The best setups I've seen are simple ones.

That's the short version. I put together a full guide that goes deeper: which skills to use, how to structure your files, the exact workflow I follow, and the mistakes that waste the most tokens. If you want it, reply and I'll send it over.

If you're not a developer, learn Cowork

I've been teaching family and friends how to use Claude Cowork. It's a feature most people don't know exists, and it completely changes how you interact with AI.

Instead of asking Claude a question and getting an answer, Cowork lets you bring it into your actual task. You're working on something together, in real time. It's the closest thing to having a smart teammate sitting next to you.

If you're not building software but you want to get real value out of AI, this is where to start. Reply and I'll send you a full guide on how to set it up and get the most out of it.

What caught my attention this week

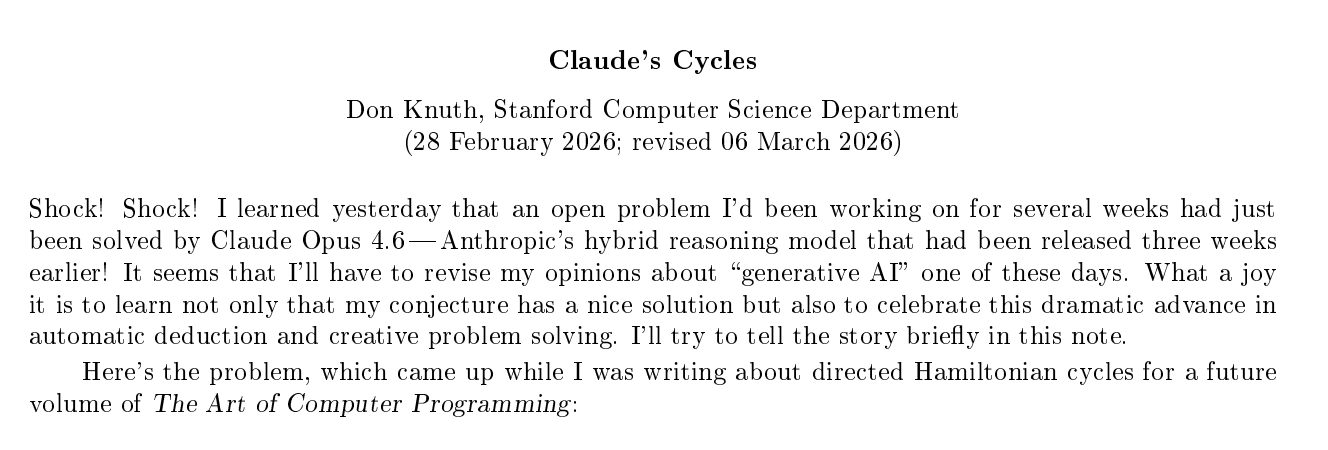

Don Knuth is in shock, and you should be too.

If you don't know Don Knuth, he's the guy who's been writing The Art of Computer Programming since 1962. Turing Award winner. One of the most important computer scientists who ever lived.

This week he published a 5-page paper documenting how Claude Opus 4.6 solved a math problem he'd been stuck on for weeks.

Filip Stappers then confirmed the solution worked for all odd values of m between 3 and 101.

The model that solved what a Turing Award winner couldn't is the same one you're using to build landing pages and write emails. The gap between what most people use AI for and what it can actually do is massive.

And it's not about paying for a better plan or unlocking a secret feature. It's about how you prompt it, how much context you give it, and whether you push it past the first answer.

Most people treat these models like search engines. Ask a question, get an answer, move on. The people getting insane results are the ones treating them like collaborators, going back and forth, challenging the output, giving it more context, and letting it reason through the problem.

GPT-5.4 is out

OpenAI launched GPT-5.4 with three variants: Thinking, Pro, and the Codex API open to developers.

The main updates:

47% fewer tokens on many tasks

1M token context window

Native Computer Use mode where the model controls your computer directly

Inside ChatGPT, Thinking mode now shows its reasoning outline and lets you adjust the prompt while it's still responding.

The token efficiency matters more than the benchmarks. If you're building on top of these models, 47% fewer tokens is real money saved.
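To put a rough number on that, here's a back-of-the-envelope sketch. The 47% comes from the launch notes above; the tokens per task, the monthly volume, and the price per million tokens are placeholders I made up, so swap in your own.

```python
def monthly_cost(tokens_per_task, tasks_per_month, price_per_million_tokens):
    """Monthly bill in dollars for a given token volume."""
    return tokens_per_task * tasks_per_month * price_per_million_tokens / 1_000_000


REDUCTION = 0.47            # "47% fewer tokens", from the announcement
TOKENS_PER_TASK = 20_000    # hypothetical
TASKS_PER_MONTH = 50_000    # hypothetical
PRICE_PER_MILLION = 10.0    # hypothetical, in dollars

before = monthly_cost(TOKENS_PER_TASK, TASKS_PER_MONTH, PRICE_PER_MILLION)
after = monthly_cost(TOKENS_PER_TASK * (1 - REDUCTION), TASKS_PER_MONTH, PRICE_PER_MILLION)

print(f"before: ${before:,.2f}")
print(f"after:  ${after:,.2f}")
print(f"saved:  ${before - after:,.2f}")
```

With these made-up inputs the bill goes from $10,000 to $5,300 a month. The savings only apply to tasks where the reduction actually shows up, so treat it as an upper bound.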

Google launched a CLI for your AI agents to manage your entire Workspace

Google released @googleworkspace/cli, a Rust binary called gws with 50+ agent skills and an MCP server built in. Your AI agents can now read and write to Gmail, Drive, Calendar, Sheets, Docs, and Chat directly from the terminal.

The workflow is like git. You run gws pull and it converts a Google Sheet into a folder with editable files. Your agent makes the changes. You run gws push and it applies them back. Same thing for Docs, Slides, whatever.

This is a big deal because it turns your entire productivity stack into something agents can operate. Not through hacks or screen scraping, but through an official API designed for it. Scheduling meetings, drafting emails, updating spreadsheets, organizing Drive: all things an agent can now do without you opening a browser.

Check it out: github.com/googleworkspace/workspace-cli

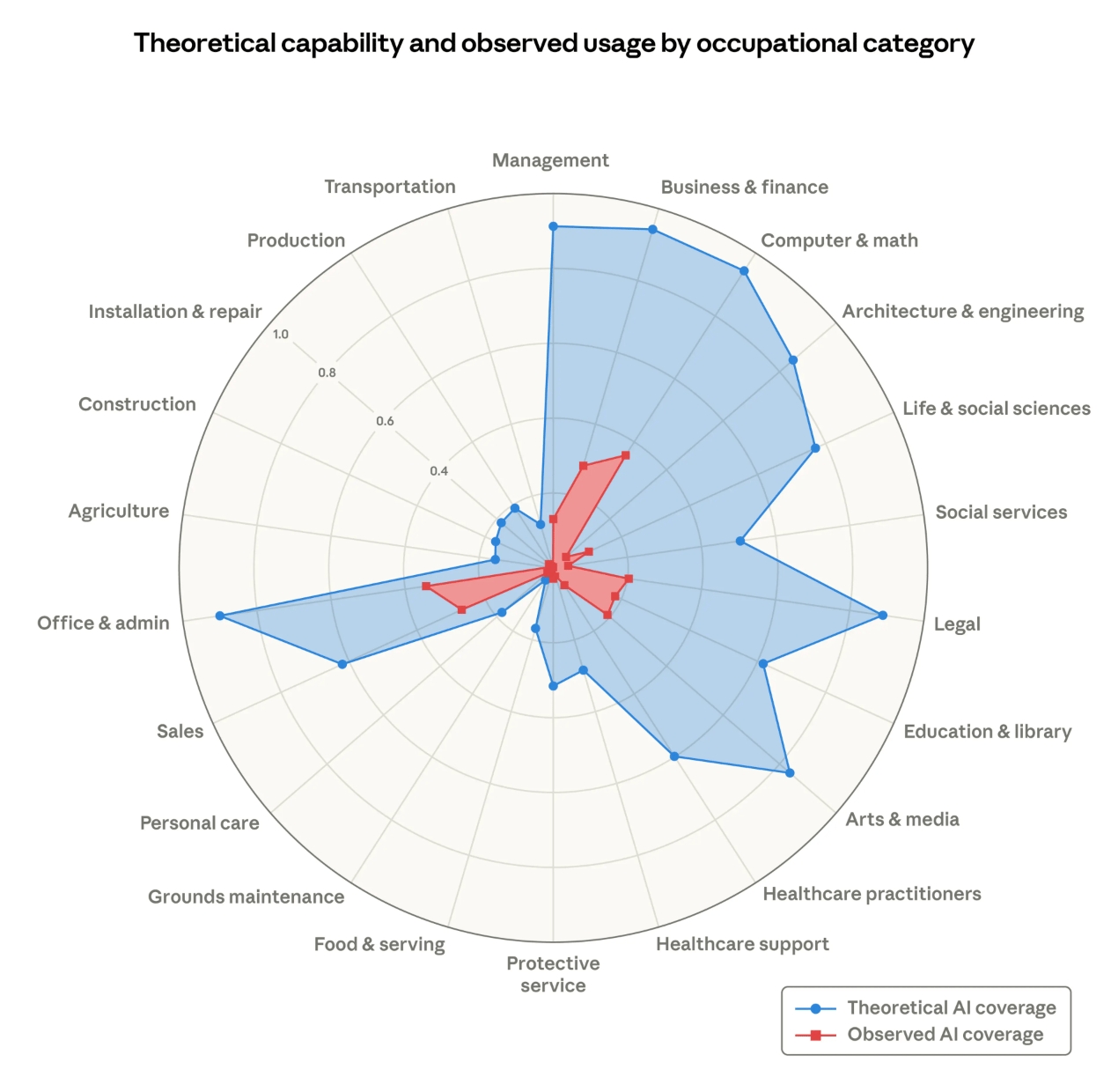

Anthropic published research on how AI is actually affecting jobs

Anthropic's researchers built a new way to measure AI's real impact on jobs.

Instead of just asking "could AI theoretically do this task," they measured what people are actually using Claude for at work.

Computer and math jobs have 94% theoretical AI exposure but only 33% actual usage. Most of the workforce hasn't been touched yet. The bottom 30% of workers by exposure (cooks, mechanics, bartenders, lifeguards) have zero AI coverage.

Where there is an effect, it's not layoffs. It's a slowdown in hiring younger workers. Occupations with high AI exposure showed a 14% drop in job-finding rates for people aged 22 to 25.

Full research: anthropic.com/research/labor-market-impacts

Quick hits

Cursor launched Automations - Coding agents that trigger themselves from a push, a Slack message, a timer, whatever. No human typing a prompt. The "prompt-and-monitor" loop that eats up your day is starting to die. https://cursor.com/blog/automations

mcp2cli - If you're using multiple MCP servers, you're burning thousands of tokens per turn just loading tool schemas. This CLI flips it: the agent discovers tools on demand instead of loading everything upfront. 96-99% fewer tokens in real benchmarks. Works with Claude Code, Cursor, any agent. github.com/knowsuchagency/mcp2cli
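Here's a toy model of why loading on demand is cheaper. To be clear, this is not how mcp2cli works internally and it doesn't reproduce its benchmarks. It's just the arithmetic of "every schema on every turn" versus "a short listing on every turn, plus full schemas only for the tools you actually call". Every number in it is invented.

```python
def upfront_tokens(schema_tokens, turns):
    """Every tool schema is sent to the model on every turn."""
    return sum(schema_tokens.values()) * turns


def on_demand_tokens(schema_tokens, tools_used, listing_tokens, turns):
    """A short tool listing on every turn, full schemas only for tools used."""
    return listing_tokens * turns + sum(schema_tokens[name] for name in tools_used)


# Hypothetical setup: 30 tools at 400 tokens of schema each, a 10-turn session,
# a 60-token tool listing, and only two tools actually called.
schema_tokens = {f"tool_{i}": 400 for i in range(30)}
tools_used = ["tool_3", "tool_17"]

upfront = upfront_tokens(schema_tokens, turns=10)
on_demand = on_demand_tokens(schema_tokens, tools_used, listing_tokens=60, turns=10)

print(f"upfront:   {upfront:,} tokens")
print(f"on demand: {on_demand:,} tokens")
```

The gap grows with the number of tools you have installed and shrinks with the number you actually use, which is also why stacking MCP servers you rarely touch is expensive.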

That's it for this week.

If you're building something with AI and want help, reply. That's literally what this newsletter is for.

-Ed

Did you enjoy this newsletter? Check out what we're building at Kleva.

And if someone forwarded this to you, you can subscribe here: